macOS keyboard shortcuts for Windows

Are you a macOS user occasionally dealing with Windows systems or trying to switch platforms? Are you a Windows user who believes that the Windows-native keyboard shortcuts are objectively bad? Are you annoyed that something as simple as copying and pasting text does not work consistently across apps?

If so, this post will equip you with an AutoHotkey configuration file that brings macOS keyboard shortcuts to Windows. Read on.

July 7, 2021

·

Tags:

macos, productivity, windows

Continue reading (about

8 minutes)

Running codesign over SSH with a new key

I just spent somewhere between 30 minutes and an hour convincing the Mac Pro that sits in my office to successfully codesign an iOS app via Bazel. This was after having to update the signing key to a newer one and after rebooting the machine due to the macOS 10.15.5 upgrade, all done remotely thanks to COVID-19.

The build of the app was failing with an errSecInternalComponent error printed by codesign. This is not the first time I have faced this but, in all previous cases, I had either been at the computer to click through security popups, had functional Chrome Remote Desktop access, or did not have to install a new signing key remotely.

May 29, 2020

·

Tags:

bazel, macos

Continue reading (about

3 minutes)

macOS terminal stalls running a binary

Here I am, confined to my apartment due to the COVID-19 pandemic and without having posted anything for almost two months. Fortunately, my family and I are still in good condition, and I’m even more fortunate to have a job that can employ me remotely without problems. Or can they?

For over a year, my team and I have been working on allowing our mobile engineers to work from their laptops (as opposed to from their powerful workstations). And guess what: now, more than ever, this has become super-important: making our engineering workforce productive when working remotely is a challenge, sure, but is also an amazing opportunity for the feature we’ve been developing for over a year.

March 23, 2020

·

Tags:

fuse, macos

Continue reading (about

6 minutes)

The /bin/bash baggage of macOS

As you may know, macOS ships with an ancient version of the Bash shell interpreter, 3.2.57. Let’s see why that is and why this is a problem.
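To see which Bash a given machine actually runs, a sketch like the following may help. The `bash_major` helper is a hypothetical name introduced here for illustration; it only extracts the major number from a version string such as the 3.2.57 quoted above.

```shell
#!/bin/sh
# bash_major is a hypothetical helper: it prints the major version number
# from a Bash version string such as "3.2.57(1)-release".
bash_major() {
    printf '%s\n' "${1%%.*}"
}

# Ask the bash found in PATH for its version; fall back to a marker if absent.
installed="$(bash -c 'printf "%s" "$BASH_VERSION"' 2>/dev/null || printf 'unknown')"
echo "bash reports version: ${installed}"

# Features such as associative arrays need Bash 4 or newer.
if [ "$(bash_major "${installed}")" = "3" ]; then
    echo "This is a Bash 3.x interpreter"
fi
```

Scripts that depend on newer features could use a check of this kind to fail early with a clear message instead of a syntax error.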

November 20, 2019

·

Tags:

macos, shell

Continue reading (about

4 minutes)

Waiting for process groups, macOS edition

In the previous posts, we saw why waiting for a process group is complicated and we covered a specific, bullet-proof mechanism to accomplish this on Linux. Now is the time to investigate this same topic on macOS. Remember that the problem we are trying to solve (#10245) is the following: given a process group, wait for all of its processes to fully terminate.

macOS has a bunch of fancy features that other systems do not have, but process control is not among them. We do not have features like Linux’s child subreaper or PID namespaces to keep track of process groups. Therefore, we’ll have to roll our own. And the only way to do this is to scan the process table looking for processes with the desired process group identifier (PGID) and waiting until they are gone.
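The scanning approach described above can be illustrated with a small shell sketch. This is not how Bazel implements it; it is a minimal polling loop, assuming that `ps -A -o pgid=` lists one PGID per process, and the helper names `pgid_in_listing` and `wait_for_pgid` are hypothetical.

```shell
#!/bin/sh
# pgid_in_listing is a hypothetical helper: it reads a list of PGIDs on
# stdin (one per line, optional leading spaces) and succeeds if the PGID
# given as $1 appears in it.
pgid_in_listing() {
    awk -v want="$1" '$1 == want { found = 1 } END { exit !found }'
}

# wait_for_pgid polls the process table once per second until no process
# reports the PGID given as $1.
wait_for_pgid() {
    while ps -A -o pgid= | pgid_in_listing "$1"; do
        sleep 1
    done
}
```

Calling `wait_for_pgid 12345` would block until the group is empty. Polling like this is inherently racy (identifiers can be reused between scans), which hints at why the problem is harder than it looks.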

November 15, 2019

·

Tags:

bazel, darwin, macos, unix

Continue reading (about

8 minutes)

A quick glance at macOS' sandbox-exec

macOS includes a sandboxing mechanism to closely control what processes can do on the system. Sandboxing can restrict file system accesses on a path level, control which host/port pairs can be reached over the network, limit which binaries can be executed, and much more. All applications installed via the App Store are subject to sandboxing.

This sandboxing functionality is exposed via the sandbox-exec(1) command-line utility, which unfortunately has been listed as deprecated for at least the last two major versions of macOS. It is still there, however, and the supplemental manual pages like sandbox(7) or sandboxd(8) do not mention the deprecation… which makes me think that the new App Sandboxing feature is built on the same kernel subsystem as sandbox-exec(1).
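As a rough illustration of what using the tool looks like, the sketch below writes a tiny profile that allows everything except network access and, where `sandbox-exec` is present, runs a command under it. The profile contents, the `write_no_network_profile` helper name, and the file location are assumptions made for this example.

```shell
#!/bin/sh
# write_no_network_profile is a hypothetical helper that writes a sandbox
# profile allowing everything except network access to the path in $1.
write_no_network_profile() {
    cat >"$1" <<'EOF'
(version 1)
(allow default)
(deny network*)
EOF
}

profile="${TMPDIR:-/tmp}/no-network.sb"
write_no_network_profile "${profile}"

# Only attempt to run the sandbox where the tool exists (that is, on macOS).
if command -v sandbox-exec >/dev/null 2>&1; then
    # The ping is expected to fail because the profile denies network access.
    sandbox-exec -f "${profile}" /sbin/ping -c 1 127.0.0.1 \
        || echo "ping failed, presumably blocked by the sandbox"
fi
```

Starting from `(allow default)` and denying selectively keeps the example short; a stricter profile would start from `(deny default)` and allow only what the process needs.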

November 1, 2019

·

Tags:

bazel, macos

Continue reading (about

3 minutes)

Optimizing tree deletions in Bazel

Bazel likes creating very deep and large trees on disk during a build. One example is the output tree, which naturally contains all the artifacts of your build. Another, more problematic example is the symlink forest trees created for every action when sandboxing is enabled. As garbage gets created, it must be deleted.

It turns out, however, that deleting file system trees can be very expensive—and especially so on macOS. In fact, calls to our deleteTree algorithm routinely showed up in my profiling runs when trying to diagnose slowdowns using the dynamic scheduler. One thing I quickly wondered is: why can I easily catch Bazel stuck in the tree deletion but I can never catch it busily creating such a tree? Is tree deletion inherently slow or are we doing something stupid?

March 22, 2019

·

Tags:

bazel, macos, performance

Continue reading (about

4 minutes)

Darwin's QoS service classes and performance

Since the publication of Bazel a few years ago, users have reported (and I myself have experienced) general slowdowns when Bazel is running on Macs: the window manager stutters, the web browser cannot load new pages, and so on. Similarly, after the introduction of the dynamic spawn scheduler, some users reported slower builds than pure remote or pure local builds, which made no sense.

All along we guessed that these problems were caused by Bazel’s abuse of system threads, as it used to spawn 200 runnable threads during analysis and used to run 200 concurrent compiler subprocesses. We tackled the problem by reducing Bazel’s abuse (e.g. commit ac88041) of system resources… and while we saw an improvement, the issue remained.

March 6, 2019

·

Tags:

bazel, featured, macos, performance

Continue reading (about

6 minutes)

Open files limit, macOS, and the JVM

Bazel’s original raison d’etre was to support Google’s monorepo. A consequence of using a monorepo is that some builds will become very large. And large builds can be very resource hungry, especially when using a tool like Bazel that tries to parallelize as many actions as possible for efficiency reasons. There are many resource types in a system, but today I’d like to focus on the number of open files at any given time (nofiles).
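For readers who want to inspect this limit on their own machine, here is a small sketch using the shell's `ulimit` builtin. The `raise_nofile` helper is a hypothetical name; it assumes a shell whose `ulimit` accepts `-n`, as bash, dash and zsh do. The operating system may impose further caps that this sketch does not account for.

```shell
#!/bin/sh
# Show the current soft and hard limits on open file descriptors.
echo "soft nofile limit: $(ulimit -Sn)"
echo "hard nofile limit: $(ulimit -Hn)"

# raise_nofile is a hypothetical helper: it sets the soft limit to the value
# in $1, clamped to the hard limit so that the call does not need privileges.
raise_nofile() {
    want="$1"
    hard="$(ulimit -Hn)"
    if [ "${hard}" != "unlimited" ]; then
        if [ "${want}" = "unlimited" ] || [ "${want}" -gt "${hard}" ]; then
            want="${hard}"
        fi
    fi
    ulimit -Sn "${want}"
}
```

The new soft limit applies to the current shell and to any processes it starts afterwards, so the helper has to run in the same shell that later launches the build.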

January 29, 2019

·

Tags:

bazel, jvm, macos, monorepo, portability

Continue reading (about

3 minutes)

Easy pkgsrc on macOS with pkg_comp 2.0

This is a tutorial to guide you through the shiny new pkg_comp 2.0 on macOS using the macOS-specific self-installer.

Goals: to use pkg_comp 2.0 to build a binary repository of all the packages you are interested in; to keep the repository fresh on a daily basis; and to use that repository with pkgin to maintain your macOS system up-to-date and secure.

February 23, 2017

·

Tags:

macos, pkg_comp, sandboxctl, software, tutorial

Continue reading (about

6 minutes)

How to add the Mac OS X screensaver to the dock

For various reasons, I have trained myself to lock my computer's screen every single time I vacate my seat. This may seem annoying to some, but once you get used to it, it becomes second nature. The reason I do this is to prevent a malicious coworker (or "guest") from stealing my credentials at work.

However, Mac OS X has traditionally not made this simple. As far as I know, the only easy way to manually launch the screensaver was (and still is) to define a hot corner to put the display to sleep. Frankly, I don't want a hot corner action for this, if only because I already have assigned other actions to every corner of my screen. However, an icon in the dock would be very convenient... and this post teaches you the non-obvious way to achieve it.

November 7, 2013

·

Tags:

macos

Continue reading (about

1 minute)

Reinstalled Mac OS X in multiple partitions, again

Last weekend, for some strange reason, I decided to dump all the MBP's hard disk contents and start again from scratch. But this time I decided to split the disk into multiple partitions for Mac OS X, to avoid external fragmentation slowdowns as much as possible.

I already did such a thing back when the MBP was new. At that time, I created a partition for the system files and another for the user data. However, that setup was not optimal and, when I got the 7200RPM hard disk drive six months later, I reinstalled again in a single partition. Just for convenience.

But external fragmentation hurts performance a lot, especially in my case because I need to keep lots of small files (the NetBSD source tree, for example) and files that get fragmented very easily (sparse virtual machine disks). These end up spreading the files everywhere on the physical disk, and as a result the system slows down considerably. I even bought iDefrag and it does a good job at optimizing the disk layout... but the results were not as impressive as I expected.

This time I reinstalled using the following layout:

- System: Mounted on /, HFS+ case insensitive, 30GB.

- Users: Mounted on /Users, HFS+ case insensitive, 50GB.

- Windows: Not mounted, NTFS, 40GB.

- Projects: Mounted on /Users/jmmv/Projects, HFS+ case sensitive, 30GB.

Using this layout, the machine really feels a lot faster. Applications start quickly, programs that deal with personal data such as iPhoto and iTunes load the library faster, and I don't have to deal with stupid disk images to keep things sequential on disk. However, the price to pay for such a layout is convenience, because now the free disk space is spread across multiple partitions.

July 5, 2008

·

Tags:

fragmentation, mac, macos

Continue reading (about

2 minutes)

Getting started with Cocoa

I recently subscribed to the Planet Cocoa aggregator and it has already brought me some interesting articles. Today, there was an excellent one titled Getting started with Cocoa: a friendlier approach posted at Andy Matuschak's blog: Square Signals.

This post guides you through your first steps with Cocoa. Its basic aim is making you gain enough intuition to let you guide yourself through Cocoa documentation in the future. If you have ever programmed in, e.g. Java, you know what this means: you first need to have some basic knowledge of the whole platform to get started and, at that point, you can do almost anything by driving to the API documentation and searching for what you need — even if you had no clue on how to accomplish your task before.

Attacking the in-depth documentation directly is hard because it overwhelms you with details that are not important to the beginner. Plus it does not show you the big picture.

I am by no means a Cocoa expert yet (in fact I'm very much a beginner), so this post will be extremely helpful to me, at least; thanks Andy! I hope it is to you too in case you wanted to begin programming for Mac OS X.

September 11, 2007

·

Tags:

cocoa, macos

Continue reading (about

1 minute)

Hibernating a Mac

Mac OS X has supported putting Macs to sleep for a very long time. This is a must-have feature for laptops, but is also convenient for desktop machines. However, it is only since the transition to Intel-based Macs that it also supports hibernation, also called deep sleep. When entering hibernation mode, the system stores all memory contents to disk as well as the status of the devices. It then powers off the machine completely. Later on, the on-disk copy is used to restore the machine to its previous state when it is powered on. It takes longer than resuming from sleep, but all your applications will be there as you left them.

Now, every time you put your Intel Mac to sleep it is also preparing itself to hibernate. This is why Intel Macs take longer than PowerPC-based ones to enter the sleep mode. This way, if the machine's battery drains completely in the case of notebooks, or the machine is unplugged in the case of desktops, the machine will be able to quickly recover itself to a safe state and you won't lose data.

As I mentioned yesterday, I've been running my MacBook Pro for a while without the battery, so I had an easy chance to experiment with hibernation. And it's marvelous. No flaws so far.

The thing is that I always powered down my Mac at night. The reason is that leaving it asleep during the whole night consumed little battery, but enough to require a recharge the next morning to bring it back to 100%, so I didn't do it. But now I usually put it to hibernate; this way, on the next boot, I can continue work straight from where I left it and I don't have to restart any applications.

Now... putting a Mac notebook into this mode is painful if you have to remove the battery every time to force it to enter hibernation mode, and unfortunately Mac OS X does not have any "Hibernate" option. But... there is this sweet Dashboard widget called Deep Sleep that lets you do exactly that! No more boots from cold state any more :-)

July 28, 2007

·

Tags:

hibernate, macos

Continue reading (about

2 minutes)

New Processor preferences panel in Mac OS X

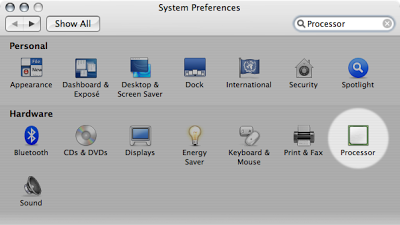

Some days ago I updated my system to the latest version of Mac OS X Tiger, 10.4.10. It hasn't been until today that I realized that there is a new cool preferences panel called Processor: It looks like this:

It looks like this: As you can see, it gives information about each processor in the machine and also lets you disable any processor you want.

As you can see, it gives information about each processor in the machine and also lets you disable any processor you want.

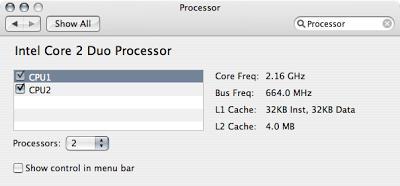

There is also another "hidden" window, accessible from the menu bar control after you have enabled it. It is called the Processor palette and looks like this: I already monitor the processor activity by using the Activity Monitor's dock icon, which is much more compact, but this one is nice :-)

I already monitor the processor activity by using the Activity Monitor's dock icon, which is much more compact, but this one is nice :-)

Edit (16:22): Rui Paulo writes in a comment that this is available if you install Xcode. It turns out I have had Xcode installed for ages, but my installation did not contain the CHUD tools. I recently added them to the system, which must be the reason behind this new item in the system preferences. So... this is not related to the 10.4.10 update I mentioned at first.

July 1, 2007

·

Tags:

macos

Continue reading (about

1 minute)

Six months with the MacBook Pro

If memory serves, today marks six months since I got my MacBook Pro and, during this period, I have been using it as my sole computer. I feel it is a good time for another mini-review.

Well... to get started: this machine is great; I probably haven't been happier with any other computer before. I have been able to work on real stuff — instead of maintaining the machine — during these months without a hitch. Strictly speaking I've got a couple of problems... but that was "my fault" for installing experimental kernel drivers.

As regards the machine's speed, which I think is the main reason why I wanted to write this post: it is pretty impressive considering it is a laptop. With a good amount of RAM, programs behave correctly and games can be played at high quality settings with a decent FPS rate. But, and everything has a "but": I really, really, really hate its hard disk (a 160 GB, 5400 RPM drive). I cannot stress that more. It's slow. Painfully slow under medium load. Seek times are horrible. That alone makes me feel I'm using a 10 year-old machine. I'm waiting for the shiny-new big 7200 RPM drives to become a bit easier to purchase and will make the switch, even if that means my battery life will be a bit shorter.

About Mac OS X... what can I say that you don't already know? It is very comfortable for daily use (although that's very subjective, of course; it's quite "funny" to read some reviews that blame OS X for not behaving exactly like Windows) and, being based on Unix, allows me to do serious development with a sane command-line environment and related tools. Parallels Desktop for Mac is my preferred tool so far, as I can seamlessly work with Windows-only programs and do Linux/NetBSD development, but other free applications are equally great; some worth mentioning: Adium X, Camino or QuickSilver.

Finally, sometimes I miss having a desktop computer at home because watching TV series/movies on the laptop is rather annoying: I have to keep adjusting the screen's position so it's properly visible when lying in bed. I can imagine that an iMac with the included remote control and Front Row could be awesome for this specific use.

All in all, don't hesitate to buy this machine if you are considering it as a laptop or desktop replacement. But be sure to pick the new 7200 RPM drive if you will be doing any slightly-intensive disk operation.

June 21, 2007

·

Tags:

mac, macos, parallels, review

Continue reading (about

3 minutes)

Mounting volumes on Mac OS X's startup

As I mentioned yesterday, I have a couple of disk images in my Mac OS X machine that hold NetBSD's and pkgsrc's source code. I also have some virtual machines in Parallels that need to use these projects' files.

In order to keep disk usage to the minimum, I share the projects' disk images with the virtual machines by means of NFS. (See Mac OS X as an NFS Server for more details.) But in doing so, a problem appears: the NFS daemon is started as part of the system's boot process, long before I can manually mount the disk images. As a result, the NFS daemon (more specifically, mountd) cannot see the exported directories and assumes that their corresponding export entries are invalid. This effectively means that, after mounting the images, I have to manually send a HUP signal to mountd to refresh its export list.

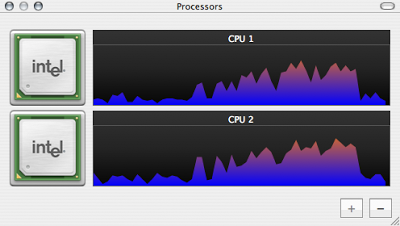

A little research will tell you that it is trivial to mount disk volumes on login by dragging their icon to the Login items section of the Accounts preference panel:

But... that doesn't solve the problem. If you do that, the images will be mounted when you log in, and that happens long after the system has spawned the NFS daemons.

Ideally, one should be able to list the disk images in /etc/fstab, just as is done with any other file system, but that does not work (or I don't know the appropriate syntax). So how do you resolve the problem? Or better said, how did I resolve it (because I doubt it's the only solution)?

It turns out it was not trivial: you need to manually write a new startup script that mounts the images for you on system startup. In order to do that, start by creating the /Library/StartupItems/DiskImages directory; this will hold the startup script as well as the necessary meta-data to tell the system what to do with it.

Then create the real script within that directory and name it DiskImages:

    #! /bin/sh
    #
    # DiskImages startup script
    #

    . /etc/rc.common

    basePath="/Library/StartupItems/DiskImages"

    StartService() {
        hdiutil attach -nobrowse /Users/jmmv/Projects/NetBSD.dmg
        hdiutil attach -nobrowse /Users/jmmv/Projects/pkgsrc.dmg
    }

    StopService() {
        true
    }

    RestartService() {
        true
    }

    RunService "$1"

Don't forget to grant the executable permission to that script with chmod +x DiskImages.

At last, create the StartupParameters.plist file, also in that directory, and put the following in it:

    {
        Description = "Automatic attachment of disk images";
        OrderPreference = "First";
        Uses = ("Disks");
    }

And that's it! Reboot and those exported directories contained within images will be properly recognized by the NFS daemon.

I'm wondering if there is a better way to resolve the issue, but so far this seems to work. Now... mmm... a UI to create and manage this script could be sweet.
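The manual steps above (create the directory, drop in the two files, make the script executable) can be sketched as a small helper. The `install_startup_item` name and its two arguments are hypothetical and only serve this illustration; any ownership or permission requirements the system may place on startup items are not covered here.

```shell
#!/bin/sh
# install_startup_item is a hypothetical helper. Arguments:
#   $1: directory containing the DiskImages script and StartupParameters.plist
#   $2: root of the target system ("/" on a real machine; a scratch directory
#       is handy for a dry run)
install_startup_item() {
    src="$1"
    dest="${2%/}/Library/StartupItems/DiskImages"

    mkdir -p "${dest}" || return 1
    cp "${src}/DiskImages" "${src}/StartupParameters.plist" "${dest}/" || return 1
    # The script must be executable for the system to run it at boot.
    chmod +x "${dest}/DiskImages"
}
```

Running it first against a scratch directory, for example `install_startup_item . /tmp/fakeroot`, makes it easy to inspect the resulting layout before repeating the call as root with `/` as the second argument.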

April 10, 2007

·

Tags:

macos, volumes

Continue reading (about

3 minutes)

How to disable journaling on a HFS+ volume

Mac OS X’s native file system (HFS+) supports journaling, a feature that is enabled by default on all new volumes. Journaling is a very nice feature as it allows a quick recovery of the file system’s status should anything bad happen to the machine — e.g. a power failure or a crash. With a journaled file system, the operating system can easily undo or redo the last operations executed on the disk without losing meta-data, effectively avoiding a full file system check.

April 9, 2007

·

Tags:

fragmentation, hfs, journal, macos

Continue reading (about

2 minutes)

NTFS read/write support for Mac OS X

It is a fact that hard disk drives are very, very large nowadays. Formatting them as FAT (in any of its versions) is suboptimal due to the deficiencies of this file system: big clusters, lack of journaling support, etc. But, like it or not, FAT is the most compatible file system out there: virtually any OS and device supports it in read/write mode.

Today, I had to reinstall Windows XP on my Mac (won't bother you with the reasons). In the past, I had used FAT32 for its 30 GB partition so I could access it from Mac OS X. But recently, some guys at Google ported Linux's FUSE to Mac OS X, effectively allowing anyone to use FUSE modules under this operating system. And you guessed right: there is a module that provides stable, full read/write support for NTFS file systems; its name: ntfs-3g.

So I installed Windows XP on an NTFS partition and gave both a try.

MacFUSE, as said above, is a port of Linux's FUSE kernel-level interface to Mac OS X. For those who don't know it, FUSE is a kernel facility that allows file system drivers to be run as user-space applications; this speeds up development of these components and also prevents some common programming mistakes from taking the whole system down. Having such a compatible interface means that you can run almost any FUSE module under Mac OS X without changes to its code.

Installing MacFUSE is trivial, but I was afraid that using ntfs-3g could require messing with the command line — which would be soooo Mac-unlike — and feared it could not integrate well with the system (i.e. no automation nor replacement of the standard read-only driver).

It turns out I was wrong. There is a very nice NTFS-3G for Mac OS X project that provides you the typical disk image with a couple of installers to properly merge ntfs-3g support into your system. Once done, just reboot and your NTFS partition will automatically come up in the Finder as a read/write volume! Sweet. Kudos to the developers that made this work.

Oh, and by the way. We have got FUSE support in NetBSD too!

March 17, 2007

·

Tags:

fuse, macos, ntfs

Continue reading (about

2 minutes)

Mac OS X aliases and symbolic links

Even though aliases and symbolic links may seem to be the same thing in Mac OS X, this is not completely true. One could think they are effectively the same because, with the switch to a Unix base in version 10.0, symbolic links became a “normal thing” in the system. However, for better or worse, differences still remain; let’s see them.

Symbolic links can only be created from the command line by using the ln(1) utility. Once created, the Finder will represent them as aliases (with a little arrow in their icon’s lower-left corner) and treat them as such. A symbolic link is stored on disk as a non-empty file which contains the full or relative path to the file it points to; then, the link’s inode is marked as special by activating its symbolic link flag. (This is how things work in UFS; I suspect HFS+ is similar, but cannot confirm it.)
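The point about a symbolic link storing nothing but a path is easy to check from the command line. The following sketch works in a scratch directory; the file names are made up for the example.

```shell
#!/bin/sh
# Work in a scratch directory so nothing important is touched.
scratch="$(mktemp -d)"
cd "${scratch}" || exit 1

echo "hello" >original.txt

# Create a symbolic link; the link stores the path exactly as given here.
ln -s original.txt link.txt

# readlink prints the stored path rather than the file's contents.
readlink link.txt

# Reading through the link transparently follows it.
cat link.txt

# Moving the target breaks the link, because only the path is stored.
mv original.txt renamed.txt
if [ ! -e link.txt ]; then
    echo "link.txt is now dangling"
fi
```

The last step shows the practical consequence of the on-disk representation: the link does not track its target when the target is renamed.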

January 20, 2007

·

Tags:

macos

Continue reading (about

2 minutes)

Hide a volume in Mac OS X

Yesterday, we saw how to install Mac OS X over multiple volumes, but there is a minor glitch in doing so: all "extra" volumes will appear as different drives in the Finder, which means additional icons on the desktop and its windows' sidebars. I find these items useless: why should I care about a part of the file system being stored in a different partition? (Note that this has nothing to do with icons for removable media and external drives, as these really are useful.)

The removal of the extra volumes from the sidebars is trivial: just right-click (or Control+click) on the drive entry and select the Remove from Sidebar option.

But how to deal with the icons on the desktop? One possibility is to open the Finder's preferences and tell it to not show entries for hard disks. The downside is that all direct accesses to the file system will disappear, including those that represent external disks.

A slightly better solution is to mark the volume's mount point as hidden, which will effectively make it invisible to the Finder. To do this you have to set the invisible extended attribute on the folder by using the SetFile(1) utility (stored in /Developer/Tools, thus included in Xcode). For example, to hide our example /Users mount point:

    # /Developer/Tools/SetFile -a V /Users

You'll need to relaunch the Finder for the changes to take effect.

The above is not perfect, though: the mount point will be hidden from all Finder windows, not only from the desktop. I don't know if there is any better way to achieve this, but this one does the trick...

January 16, 2007

·

Tags:

macos

Continue reading (about

2 minutes)

Install Mac OS X over multiple volumes

As you may already know, Mac OS X is a Unix-like system based on BSD and Mach. Among other things, this means that there is a single virtual file system on which you can attach new volumes by means of mount points and the mount(8) utility. One could consider partitioning a disk to place specific system areas in different partitions to prevent the degradation of each file system, but the installer does not let you do this (I suspect the one for Mac OS X Server might have this feature, but this is just a guess). Being Unix, this doesn't mean it isn't possible!

As a demonstration, I explain here how to install Mac OS X so that the system files are placed in one partition and the users' home directories are in another one. This setup keeps mostly-static system data self contained in a specific area of the disk and allows you to do a clean system reinstall without losing your data nor settings.

First of all boot the installation from the first DVD and execute the Disk Utility from the Utilities menu. There you can partition your disk as you want, so create a partition for the system and one for the users; let's call them System and Users respectively, being on the disk0s2 and disk0s3 devices. Both should be HFS+, but you can choose whether you want journaling and/or case sensitivity independently. Exit the tool and go back to the installer.

Now do a regular install on the System volume, ignoring the existence of Users. To make things simple, go through the whole installation, including the welcome wizard. Once you are in the default desktop, get ready for the tricky stuff.

Reboot your machine and enter single user mode by pressing and holding Command+s just after the initial chime sound. At the command line, follow the instructions to remount the root volume as read/write. If I recall correctly, it tells you to do:

    # fsck -fy /
    # mount -uw /

Mount the Users volume in a temporary location, copy the current contents of /Users into it and remove the original files. For example:

    # mkdir /Users2
    # mount -t hfs /dev/disk0s3 /Users2
    # rsync -avE /Users/ /Users2
    ... ensure that /Users2 matches /Users ...
    # rm -rf /Users/.[a-zA-Z_]* /Users/*
    # umount /Users2
    # rmdir /Users2

I used rsync(1) instead of cp(1) because it preserves the files' resource forks, if present (provided you give it the -E option).

Once the data migration is done, you can proceed to tell the system to mount the Users volume in the appropriate place on the next boot. Create the /etc/fstab file and add the following line to it:

    /dev/disk0s3 /Users hfs rw

Ensure it works by mounting it by hand:

    # mount /Users

If no problems arise, you're done! Reboot and enjoy your new system.
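To double-check the result after mounting, one option is to look for the expected line in the output of mount(8). The sketch below assumes the usual `<device> on <directory> (...)` line format and paths without spaces; `is_mounted_on` is a hypothetical helper name.

```shell
#!/bin/sh
# is_mounted_on is a hypothetical helper. It reads mount(8) output on stdin
# and succeeds if device $1 is mounted on directory $2. It assumes the
# "<device> on <directory> (...)" line format.
is_mounted_on() {
    awk -v dev="$1" -v dir="$2" \
        '$1 == dev && $2 == "on" && $3 == dir { found = 1 } END { exit !found }'
}

if mount | is_mounted_on /dev/disk0s3 /Users; then
    echo "/Users is backed by /dev/disk0s3"
else
    echo "/Users is not on /dev/disk0s3"
fi
```

Reading the listing from stdin keeps the helper easy to try against a saved copy of the mount output as well as the live system.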

The only problem with the above strategy is that your root volume must be big enough to hold the whole installation before you can reorganize the partitions. I haven't tried it but maybe, just maybe, you could do some manual mounts from within the Terminal available in the installer. That way you'd set up the desired mount layout before any files are copied, delivering the appropriate results. Note that if this worked, you'd still need to do the fstab trick in this case, but you'd have to do it on the very first reboot, even before the install is complete!

January 15, 2007

·

Tags:

macos

Continue reading (about

3 minutes)

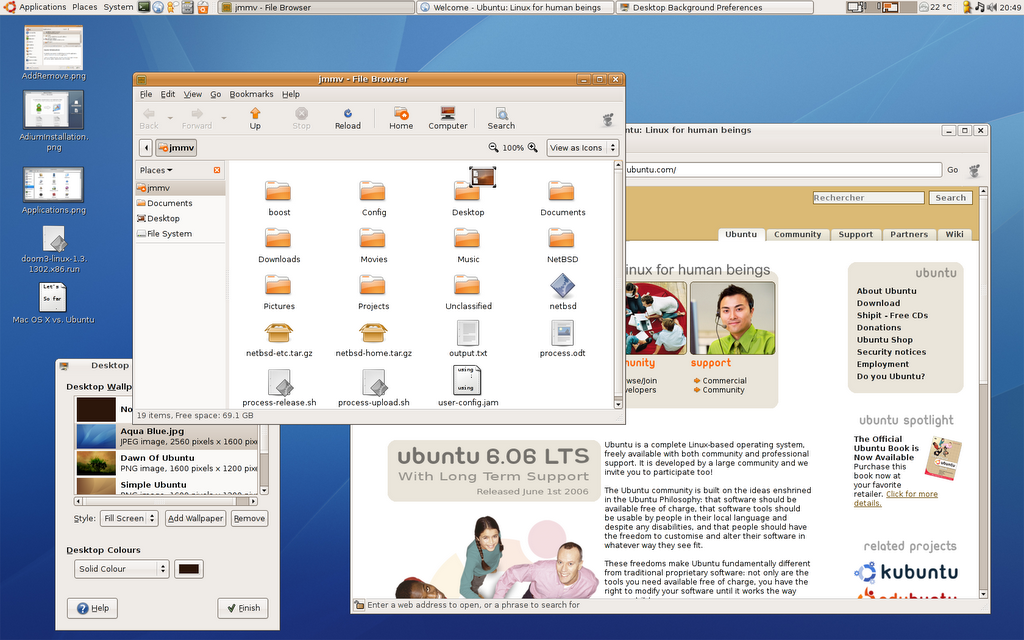

Mac OS X vs. Ubuntu: Summary

I think I’ve already covered all the areas I had in mind about these two operating systems. And as the thread has lasted for too long, I’m concluding it now. Here is a summary of all items described:

- 2006-09-28: Introduction

- 2006-09-29: Hardware support

- 2006-09-30: The environment

- 2006-10-01: Software installation

- 2006-10-02: Automatic updates

- 2006-10-03: Freedom

- 2006-10-07: Commercial software

- 2006-10-20: Development platform

- 2006-10-25: Summary

After all these notes I still can’t decide which operating system I’d prefer based on quality, features and cost. Nowadays I’m quite happy with Kubuntu (installed it to see how it works after breaking Ubuntu and it seems good so far) and I’ll possibly stick to it for some more months.

October 25, 2006

·

Tags:

macos, ubuntu

Continue reading (about

1 minute)

Mac OS X vs. Ubuntu: Development platform

First of all, sorry for not completing the comparison between systems earlier. I had to work on some university assignments and started to play a bit with Haskell, which made me start a rewrite of a utility (more on this soon, I hope!).

Let’s now compare the development platform provided by these operating systems. This is something most end users will not ever care about, but it certainly affects the availability of some applications (especially commercial ones), their future evolution and how the applications work e.g. during installation.

October 20, 2006

·

Tags:

macos, ubuntu

Continue reading (about

5 minutes)

Mac OS X vs. Ubuntu: Commercial software

As much as we may like free software, there are a lot of interesting commercial applications out there (be they free as in free beer or not). Given the origins and spirit of each OS, the number of commercial applications available for them is vastly different.

Let’s start with Ubuntu (strictly speaking, Linux). Although trends are slowly changing, the number of commercial programs that target Linux systems is really small. I have reasons to believe that this is because Linux, as a platform to provide such applications, is awful. We already saw an example of this in the software installation comparison because third-party vendors have a hard time distributing their software in the Linux world. We will see more examples of this soon in another post.

October 7, 2006

·

Tags:

macos, ubuntu

Continue reading (about

3 minutes)

Mac OS X vs. Ubuntu: Freedom

Ubuntu is based on Debian GNU/Linux, a free (as in free beer and free speech) Linux-based distribution and the free GNOME desktop environment. Therefore it keeps the philosophy of the two, being itself also free. Summarizing, this means that the user can legally modify and copy the system at will, without having to pay anyone for doing so. When things break, it is great to be able to look at the source code, find the problem and fix it yourself; of course, this is not something that end users will ever do, but I have found this situation valuable many times (not under Ubuntu though).

October 3, 2006

·

Tags:

macos, ubuntu

Continue reading (about

2 minutes)

Mac OS X vs. Ubuntu: Automatic updates

Security and/or bug fixes, new features… all those are very common in newer versions of applications, and this obviously includes the operating system itself. A desktop OS should provide a way to painlessly update your system (and possibly your applications) to the latest available versions; the main reason is to be safe from exploits that could damage your software and/or data.

Both Mac OS X and Ubuntu provide tools to keep themselves updated and, to some extent, their applications too. These utilities include an automated way to schedule updates, which is important to avoid leaving a system unpatched against important security issues. Let’s now drill down into the two OSes a bit more.

October 2, 2006

·

Tags:

macos, ubuntu

Continue reading (about

3 minutes)

Mac OS X vs. Ubuntu: Software installation

Installing software under a desktop OS should be a quick and easy task. Although systems such as pkgsrc (which build software from its source code) are very convenient sometimes, they get really annoying on desktops because problems pop up more often than desired and builds take hours to complete. In my opinion, a desktop end user must not ever need to build software by himself; if he needs to, someone in the development chain failed. Fortunately the two systems I’m comparing seem to have resolved this issue: all general software is available in binary form.

October 1, 2006

·

Tags:

macos, ubuntu

Continue reading (about

4 minutes)

Mac OS X vs. Ubuntu: The environment

I’m sure you are already familiar with the desktop environments of both operating systems so I’m just going to outline here the most interesting aspects of each one. Some details will be left for further posts as they are interesting enough on their own. Here we go:

Ubuntu, being yet another GNU/Linux distribution, uses one of the desktop environments available for this operating system, namely GNOME. GNOME aims to be an environment that is easy to use and doesn’t get in your way; they are achieving it. There are several details that remind us of Windows more than Mac OS X: for example, we have got a task bar on the bottom panel (that is, pardon me, a real mess due to its annoying behavior) and a menu bar that is tied to the window it belongs to.

September 30, 2006

·

Tags:

macos, ubuntu

Continue reading (about

4 minutes)

Mac OS X vs. Ubuntu: Hardware support

Let’s start our comparison by analyzing the quality of hardware support under each OS. In order to be efficient, a desktop OS needs to handle most of the machine’s hardware out of the box with no user intervention. It also has to deal with hotplug events transparently so that pen drives, cameras, MP3 players, etc. can be connected and start to work magically. We can’t forget power management, which is getting more and more important lately even on desktop systems: being able to suspend the machine during short breaks instead of powering it down is extremely convenient.

September 29, 2006

·

Tags:

macos, ubuntu

Continue reading (about

3 minutes)

Mac OS X vs. Ubuntu: Introduction

About a week ago, my desktop machine was driving me crazy because I couldn’t comfortably work on anything other than NetBSD and pkgsrc themselves. With “other work” I’m referring to Boost.Process and, most importantly, university assignments. Given my painless experience with the iBook G4 laptop I’ve had for around a year, I had decided to replace the desktop machine with a Mac (most likely a brand-new iMac 20") to run Mac OS X on top of it exclusively. OK, OK, alongside Windows XP to satisfy the occasional urge to play some games.

September 28, 2006

·

Tags:

boost-process, macos, ubuntu

Continue reading (about

2 minutes)